AI Prompt Shortcuts & Best Practices: UK Professional's 2026 Guide

Quick Summary

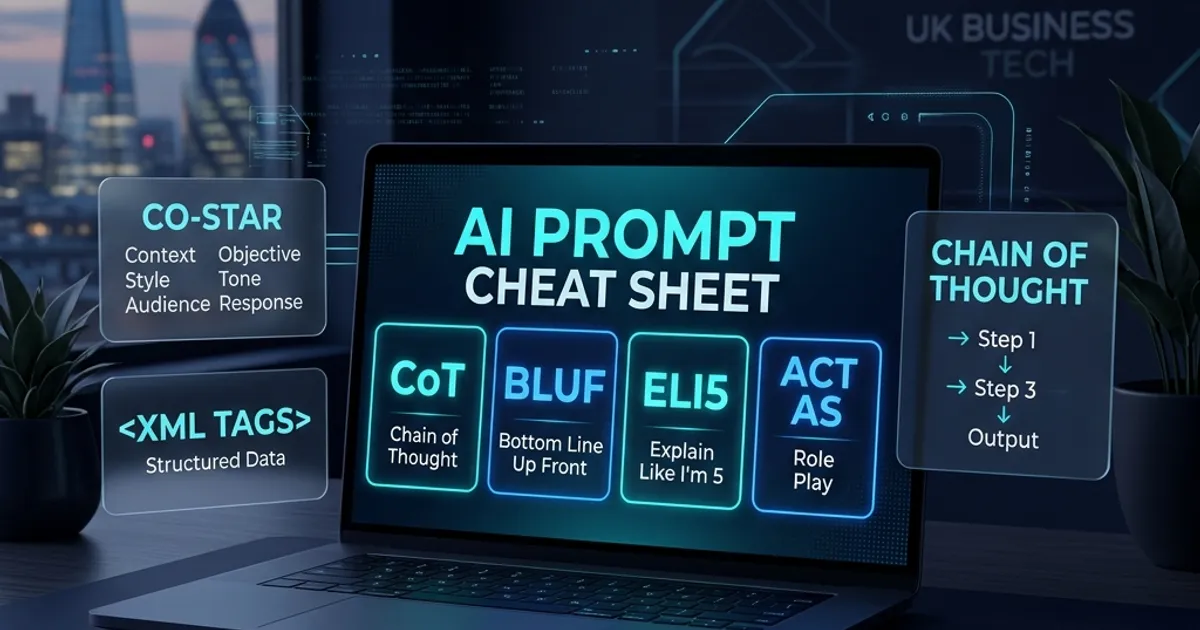

Using the right AI prompt abbreviations and structured frameworks can reduce wasted outputs by up to 80% and cut prompt iteration time in half, according to Anthropic productivity research.

This guide covers 30+ proven shortcuts including CoT, BLUF, and XML tagging, mapped across ChatGPT, Claude, Gemini, and Microsoft Copilot with 17 copy-paste UK business prompt templates.

UK professionals can apply these techniques immediately, from ACAS-aware HR prompts to IR35 status determination and FCA Consumer Duty compliance templates, without any technical background.

Most UK professionals using AI tools are accepting mediocre outputs because of one fixable problem: unstructured prompting. This guide covers thirty-plus proven abbreviations, frameworks, and copy-paste templates that cut iteration time in half - starting with the techniques you can apply in the next five minutes.

Table of Contents

- Introduction: Why Most People Are Prompting AI Wrong

- The AI Prompt Abbreviations Cheat Sheet (30+ Shortcuts Explained)

- The Anatomy of a Great Prompt: The CO-STAR Framework

- Chain of Thought and The Politeness Trap

- Role Prompting: How Specificity Transforms AI Outputs

- Format Control: Getting AI to Output Exactly What You Need

- XML Tagging for Claude and Structured Prompts

- Few-Shot Prompting and Prompt Chaining for Complex Tasks

- Agentic AI and UK Consumer Law Compliance

- Platform-Specific Tips: ChatGPT, Claude, Gemini, and Copilot

- Key Takeaways

- Conclusion: Building Your Personal Prompt Library

- References

Introduction: Why Most People Are Prompting AI Wrong

Consider the following rather painful scenario. A UK marketing director sits staring at a screen, increasingly frustrated. They have just asked an AI tool to write a strategic brief, and the output is a meandering, jargon-filled mess. Twenty minutes are wasted tweaking natural language sentences, pleading with the machine to "make it sound more professional" or "cut the fluff." Finally, out of sheer exhaustion, a mediocre draft is accepted.

This happens in offices across the country every single day. The underlying issue is that most AI users never learn structured prompting. They type natural conversational sentences into chat interfaces and blindly accept the first output. But artificial intelligence is not a search engine; it is a probabilistic reasoning engine. The inputs dictate the initial conditions, and small variations in those initial conditions lead to drastically different outcomes.

Right, here is the reality of the situation in 2026. The shift from casually experimenting with AI to relying on AI as a daily productivity dependency is complete. According to the Office for National Statistics (ONS), general AI adoption among UK firms jumped from a mere 9 percent in 2023 to a projected 22 percent in 2024, with rapid integration accelerating across the service sector. Firms in the top decile of management practices have an 88 percent adoption rate of advanced technologies, proving that well-managed businesses are leaning heavily into AI.

Meanwhile, a recent Acas survey reveals that 35 percent of employers believe AI will fundamentally increase workplace productivity. However, the primary barrier to adoption remains a lack of internal expertise, cited by 35 percent of IT decision-makers in a 2025 techUK report. People simply do not know how to steer the models effectively.

This matters. Research from Anthropic indicates that effective use of AI can reduce task completion time by 80 percent, turning a 90-minute complex task into a brief oversight exercise. Conversely, unstructured prompting can actually intensify workloads. An eight-month ethnographic study from UC Berkeley Haas found that without structured workflows, generative AI can lead to employees working at a faster pace on a broader scope of tasks, expanding work rather than reducing it. Without the right prompt structure, you just end up doing more mediocre work, faster.

TopTenAIAgents.co.uk has reviewed over 130 AI platforms to identify which tools respond best to structured prompt techniques - and the results are incredibly illuminating. Across the board, applying simple abbreviations, structured frameworks, and specific formatting rules cuts through the noise. This implementation guide serves as the definitive cheat sheet for UK professionals. It covers the abbreviations, the platform-specific quirks, and the ready-to-use templates that bridge the implementation void.

The AI Prompt Abbreviations Cheat Sheet (30+ Shortcuts Explained)

Let us not muck about. Efficiency in prompting comes down to shorthand. By utilising recognised abbreviations and commands, professionals can bypass lengthy explanations and trigger specific, well-practised behaviours in the model.

Thing is, these abbreviations work because large language models have encountered them millions of times in their training data. They function as high-signal tokens that immediately adjust the statistical probability of the output. Professionals who type out a three-paragraph explanation of how they want a document summarised often find that a four-letter acronym produces a superior result.

The Essential Thinking Shortcuts

- CoT (Chain of Thought): Forces step-by-step reasoning for complex logic. Use this when the AI needs to calculate or evaluate a sequence of events. See Codewave's Prompt Engineering Cheat Sheet for technical implementation details.

- ToT (Tree of Thoughts): Instructs the AI to explore multiple reasoning paths before concluding. Excellent for strategy generation.

- ZS-CoT (Zero-Shot Chain of Thought): This is simply adding "Think step by step" to the end of a prompt. It is the lowest-effort way to boost AI accuracy on complex tasks.

Output Format Shortcuts

- BLUF (Bottom Line Up Front): A military-origin phrase that instructs the AI to lead with the conclusion, followed by supporting details. Essential for executive summaries.

- TL;DR (Too Long; Didn't Read): Requests a hyper-concise summary of a large text block.

- ELI5 (Explain Like I'm 5): Simplifies highly complex or technical jargon into accessible language. You can also modify this - ELI12 for a slightly more advanced breakdown.

- JSON/Markdown: Forces the AI to output data in a structured format, preventing it from adding conversational filler like "Here is your table:".

Tone and Iteration Shortcuts

- Jargonize: Elevates the vocabulary to an industry-expert level, useful for B2B LinkedIn posts.

- Humanize: Strips out robotic cadence and AI buzzwords ("synergy", "delve", "tapestry"), making the text sound natural.

- "Continue": When an AI cuts off due to output token limits, typing this forces it to resume exactly where it stopped.

- "FYI / JSYK": Prefacing context with "For Your Information" or "Just So You Know" sets the background tone without confusing the AI into thinking it is a direct instruction.

The Full Quick Reference Table

| Abbreviation/Shortcut | Full Form | What It Does | Example Use |
|---|---|---|---|
| CoT | Chain of Thought | Forces step-by-step reasoning | "Think step by step, then answer:" |
| BLUF | Bottom Line Up Front | Lead with conclusion | "Reply BLUF format:" |
| ELI5 | Explain Like I'm 5 | Simplify complex content | "ELI5: explain IR35" |
| TL;DR | Too Long; Didn't Read | Request a summary | "TL;DR this document:" |
| ZS-CoT | Zero-Shot Chain of Thought | CoT without examples | Add "think step by step" |
| ToT | Tree of Thoughts | Explores varied logical paths | "ToT: plan three marketing campaigns." |
| ACT AS | Role Prompting | Anchors AI to a specific persona | "ACT AS an FCA-regulated financial adviser." |
| JSON | JavaScript Object Notation | Forces structured data output | "Output the analysis strictly in JSON." |
| Jargonize | Jargonize | Elevates vocabulary to expert level | "Jargonize: explain our new cloud architecture." |
| Humanize | Humanize | Removes robotic cadence | "Humanize this email draft to a client." |
| RISEN | Role, Instructions, Steps, End goal, Narrowing | Structured task framework | Full prompt framework for step-by-step tasks |
| CO-STAR | Context, Objective, Style, Tone, Audience, Response | Complete prompt framework | Full prompt architecture for creative and analytical tasks |
| SBI | Situation, Behaviour, Impact | Feedback framework | Performance review and management conversations |

Look, incorporating just BLUF and CoT into your daily workflow will save you hours. It forces the AI to be concise with its answers while being rigorous with its reasoning.

The Anatomy of a Great Prompt: The CO-STAR Framework

If there is no consistent pattern to your prompt, the output will reflect that chaos. Prompt engineering frameworks solve this by forcing the user to provide all necessary context upfront.

In the early days of generative AI, prompt engineering was treated as an art. In 2026, it is infrastructure. We have moved from simple prompt engineering to context engineering - the discipline of curating the optimal universe of tokens and information before the model even begins to generate a response.

While frameworks like RICE (Role, Instructions, Context, Examples) and RISEN (Role, Instructions, Steps, End goal, Narrowing) have their place, the most universally applicable framework for UK business tasks is CO-STAR. The Portkey guide to CO-STAR provides a thorough breakdown of its origins and variations.

The empirical evidence demonstrates that missing even one of these components leads to hallucinations or generic outputs. Let us break it down:

- C - Context: What is the background? Give the AI the situational awareness it lacks. ("TopTenAIAgents is an AI research portal for UK SMEs. We are launching a new newsletter.")

- O - Objective: What is the exact task? Be highly specific. ("Write a 200-word introduction for the Q3 market update.")

- S - Style: Whose writing style should be emulated? ("The Financial Times.")

- T - Tone: What is the emotional resonance? ("Authoritative, pragmatic, and slightly dry.")

- A - Audience: Who is reading this? ("UK-based managing directors and tech founders.")

- R - Response format: How should it physically look? ("Two paragraphs, no bullet points, Markdown format.")
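The six components above can be kept honest with a small template. The following is a minimal sketch in Python, assuming a hypothetical `CoStarPrompt` helper (not part of any library) that refuses to render a prompt when a component is left empty; the field values are the examples from the list above.

```python
from dataclasses import dataclass


@dataclass
class CoStarPrompt:
    """Holds the six CO-STAR components (hypothetical helper, not a library class)."""

    context: str
    objective: str
    style: str
    tone: str
    audience: str
    response_format: str

    def render(self) -> str:
        """Join the components into one labelled prompt, refusing empty fields."""
        parts = {
            "Context": self.context,
            "Objective": self.objective,
            "Style": self.style,
            "Tone": self.tone,
            "Audience": self.audience,
            "Response format": self.response_format,
        }
        missing = [name for name, value in parts.items() if not value.strip()]
        if missing:
            raise ValueError(f"Missing CO-STAR components: {', '.join(missing)}")
        return "\n".join(f"{name}: {value.strip()}" for name, value in parts.items())


costar_prompt = CoStarPrompt(
    context="TopTenAIAgents is an AI research portal for UK SMEs. We are launching a new newsletter.",
    objective="Write a 200-word introduction for the Q3 market update.",
    style="The Financial Times.",
    tone="Authoritative, pragmatic, and slightly dry.",
    audience="UK-based managing directors and tech founders.",
    response_format="Two paragraphs, no bullet points, Markdown format.",
).render()
print(costar_prompt)
```

The labelled-line layout is one design choice among many; the point is that a missing component fails loudly before the prompt is ever sent.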

Before and After: The Anatomy in Action

Here is what actually works in the real world.

Weak Prompt: "Write an email about the project delay."

Optimised Prompt (CO-STAR applied): "ACT AS a UK senior project manager (Role/Style). We are building a bespoke CRM for a logistics firm, and a critical API integration has failed, causing a two-week delay (Context). Write a professional email notifying the client stakeholder (Objective/Audience). The tone must be apologetic but highly confident and solutions-oriented, completely avoiding any panic (Tone). Format: BLUF - lead with the revised timeline, then explain the technical issue simply (ELI5), then list the immediate next steps in bullet points (Response format)."

The difference in output quality is night and day. The first produces a generic, defensive apology. The second produces a ready-to-send piece of crisis management communication that protects the commercial relationship.

Common Mistake: The "Write Something Great" Trap

Never use subjective adjectives in your objective. Asking an AI to write "a great report" or a "catchy social media post" leaves the definition of quality entirely up to the model's baseline training. Replace subjective terms with structural rules. Instead of "catchy", ask for "a post that opens with a contrarian statement and uses high-impact verbs."

Chain of Thought and The Politeness Trap

Here is where it gets highly technical, but stay with me. Large language models do not "think" in the human sense; they predict the next token based on statistical probability. If an AI is asked to solve a complex problem immediately, it often guesses the final answer and hallucinates the reasoning to match.

Chain of Thought (CoT) prompting interrupts this architectural flaw. By adding the phrase "think step by step" (Zero-Shot CoT), the model is forced to output its intermediate reasoning before arriving at a conclusion. This uses the model's own output as a cognitive scratchpad, massively increasing accuracy on complex logistical, mathematical, or strategic tasks.

Template 1: Financial Benchmarking (CoT)

"Compare and contrast the attached Q3 reports from Competitor A and Competitor B. Think step by step. Extract the revenue growth, margins, and cost ratios. Identify the performance gaps. Outline the best practices Competitor A is using that we are not. Output your reasoning first, followed by a summary table."

The Politeness Trap: A UK-Specific Problem

Now, I know what you're thinking. Surely being polite to the AI helps? We are British, after all. We naturally pad our requests with "Could you possibly..." and "I would be very grateful if..."

Brilliant, but here is the catch. A fascinating 2025 academic paper published on arXiv ("Mind Your Tone: Investigating How Prompt Politeness Affects LLM Accuracy") evaluated how models responded to varying levels of politeness. The researchers tested prompts ranging from "Very Polite" to "Very Rude".

The results? The "Very Rude" prompts achieved an 84.8% accuracy rate, while the "Very Polite" prompts dropped to 80.8%.

Why does this happen? Because large language models process language based on statistical patterns, not social context. When a prompt is padded with British understatement, hedges, and subtle civility, the model struggles to identify the core instruction. It starts to resemble an adversarial prompt, burying the actual intent under layers of noise. A piece from Kerson AI on British communication and AI explores this cultural dimension in detail.

Directness is your best friend. Cut through the nonsense. Do not say: "Right then, I suppose I should think about possibly asking you to review this contract if you have a moment." Say: "Review this contract. Identify three liability risks. Format as bullet points." Clarity always trumps courtesy in prompt engineering.
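One way to catch padding before a prompt is sent is a simple phrase check. This is a rough sketch in Python, assuming a hand-picked `HEDGES` list drawn from the phrases quoted in this section; the list and the `find_hedges` function are illustrative, not a validated detector.

```python
# Illustrative hedging phrases taken from the examples in this section.
HEDGES = [
    "could you possibly",
    "i would be very grateful if",
    "if you have a moment",
    "i suppose",
    "right then",
]


def find_hedges(prompt: str) -> list:
    """Return the hedging phrases from HEDGES that appear in the prompt."""
    text = prompt.lower()
    return [phrase for phrase in HEDGES if phrase in text]


padded = (
    "Right then, I suppose I should think about possibly asking you "
    "to review this contract if you have a moment."
)
direct = "Review this contract. Identify three liability risks. Format as bullet points."

print(find_hedges(padded))
print(find_hedges(direct))
```

A non-empty result is a prompt to rewrite the request as a direct instruction, not proof that the output will be worse.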

Role Prompting: How Specificity Transforms AI Outputs

Assigning a persona to an AI model narrows its statistical universe. If an AI is told to act as an "expert," it defaults to a generic, Americanised corporate tone. If it is told to act as a "UK employment solicitor specialising in employment tribunal claims and Acas early conciliation," it adjusts its vocabulary, legal referencing, and tone to match that highly specific sub-segment of its training data.

For UK professionals choosing between AI tools, TopTenAIAgents provides detailed prompt compatibility notes across all major platform reviews, noting exactly how different models respond to role anchoring. Specificity is the difference between a useless draft and a publishable document.

The UK Professional Role Library

Instead of generic roles, practitioners must use hyper-specific personas tailored to the UK regulatory and business environment.

Template 2: ACAS-Aware HR Disciplinary Prep

"ACT AS a UK HR Director with 20 years of experience managing ACAS-compliant disciplinary procedures. I need to prepare for a first written warning meeting regarding an employee's repeated absenteeism. Provide a script for the meeting that ensures all legal obligations are met, avoids tribunal risks, and maintains a supportive but firm tone. Include a list of specific questions I must ask the employee to ensure procedural fairness."

Template 3: IR35 Status Determination (SDS)

"ACT AS a UK tax specialist with deep expertise in HMRC's off-payroll working rules (IR35). Review the following working practices for a contractor role. Evaluate the core factors of Substitution, Control, and Mutuality of Obligation. Draft a valid Status Determination Statement (SDS) providing clear reasoning for why this role falls inside or outside IR35, ending with the final determination."

Template 4: FCA-Regulated Financial Review

"ACT AS an FCA-regulated financial adviser based in the UK. Review the attached promotional copy for a new retail investment product. Think step by step. Identify any claims that imply guaranteed returns. Check if the standard capital-at-risk warnings are prominent. Evaluate if the tone breaches the Consumer Duty requirement for clear, fair, and not misleading communication. Output your reasoning first, followed by a final compliance risk rating."

Template 5: UK Market Intelligence Analyst

"ACT AS a senior market analyst based in London. Conduct a lite version of Porter's Five Forces analysis on the UK B2B software sector. Focus specifically on the threat of new AI-native entrants. Jargonize the output for a board-level audience. Format the output with clear headings and quantifiable metrics where possible."

Format Control: Getting AI to Output Exactly What You Need

One of the most frustrating aspects of using AI is receiving a brilliant insight buried within six paragraphs of dense prose. You then spend ten minutes formatting it for your presentation. Format control is the antidote.

By strictly defining the output architecture, professionals can generate content that is ready to be pasted directly into a spreadsheet, a presentation, or an email client without manual reformatting.

- Markdown Control: "Format strictly using Markdown headers and bold text for key terms."

- Tabular Data: "Output this competitive analysis as a Markdown table comparing features, pricing, and UK market share."

- Constraint Setting: "Exactly 150 words. No introduction. No conclusion. Bullet points only."
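Format rules are easiest to trust when the output is checked by code rather than by eye. The sketch below, in Python, assumes a hypothetical `parse_json_reply` helper and a made-up sample reply; it pulls the JSON object out of a reply even when the model has wrapped it in conversational filler.

```python
import json


def parse_json_reply(reply: str) -> dict:
    """Parse the text between the first '{' and the last '}' as a JSON object."""
    start = reply.find("{")
    end = reply.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("No JSON object found in reply")
    return json.loads(reply[start : end + 1])


# Hypothetical reply showing the filler that strict format rules aim to prevent.
sample_reply = 'Here is your table:\n{"vendor": "Acme Ltd", "price_gbp": 1200}\nHope that helps!'
parsed = parse_json_reply(sample_reply)
print(parsed["price_gbp"])
```

If parsing fails, that is a signal to tighten the format instruction rather than to clean the reply by hand.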

Template 6: SME Invoice Generation Requirements

"ACT AS a UK bookkeeper. Review the following project details and generate the line-item text for an invoice. Ensure it includes placeholders for all mandatory UK invoice requirements (Business ID, VAT number, payment terms). Format as a neat Markdown table with columns for Description, Quantity, Unit Price (£), and Total. Do not include any conversational text before or after the table."

Template 7: Executive Summary (BLUF)

"Analyse the attached 30-page quarterly earnings transcript. Provide a summary using the BLUF (Bottom Line Up Front) format. The very first sentence must state the overall financial health and core strategic shift. Follow with a bulleted list of three main risks and three main opportunities. Maximum 200 words. Use UK English spelling."

The Prompt Quality Checklist

Before sending any AI prompt, run through these seven checks:

- Role assigned? ("ACT AS a [specific UK professional role]...")

- Context provided? (What situation, what company, what audience?)

- Task clearly stated? (Not "write something" but "write a 200-word...")

- Format specified? (Bullet points, table, email, BLUF, report format?)

- Examples given? (At least one example if style/tone matters)

- Constraints noted? (Word count, UK English, GDPR-aware, tone)

- Output review step? ("Before answering, confirm you understand the task")
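Part of this checklist can be automated as a rough pre-send check. The following is a heuristic sketch in Python: the `CHECKS` patterns are assumptions about how four of the seven items might show up in a prompt, and a keyword match is no guarantee that the item is actually well covered.

```python
import re

# Heuristic patterns only; each is an assumption about how a checklist item might appear.
CHECKS = {
    "role": r"\bact as\b",
    "format": r"\b(bullet points?|table|markdown|json|bluf|email|report)\b",
    "constraints": r"\b(\d+\s*words?|uk english|maximum|exactly)\b",
    "examples": r"\bexamples?\b",
}


def missing_checks(prompt: str) -> list:
    """Return the checklist items whose pattern does not appear in the prompt."""
    text = prompt.lower()
    return [name for name, pattern in CHECKS.items() if not re.search(pattern, text)]


weak = "Write an email about the project delay."
strong = (
    "ACT AS a UK HR Director. Draft a meeting script as bullet points. "
    "Maximum 200 words. Use UK English. Example of the tone I want: [paste example]."
)
print(missing_checks(weak))
print(missing_checks(strong))
```

Context, task clarity, and the output review step are left to human judgement here because they are hard to detect with keywords.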

XML Tagging for Claude and Structured Prompts

This is a critical update for 2026. If a business relies on Anthropic's Claude or advanced GPT-4o deployments, unstructured text is no longer the recommended approach. XML tagging has become the gold standard for complex prompts.

XML tags (like <context>, <instructions>, <input>) are not just formatting quirks; they act as clear boundaries between the parts of a prompt. The model is not running an XML parser, and no particular tag names are required; what helps is that each type of content is unambiguously delimited, so the AI is less likely to confuse background information with the actual task. According to Anthropic's prompt engineering documentation, wrapping each type of content in its own tag reduces misinterpretation.

How to Structure an XML Prompt

<role>
ACT AS a senior data privacy officer in the UK.
</role>

<context>
Our SME operates a SaaS platform. We are updating our data handling procedures
following new ICO guidance on generative AI in the workplace. We do not process
biometric data, but we do process basic employee details.
</context>

<task>
Draft the internal staff policy on acceptable AI use.
</task>

<constraints>
- Must align with UK GDPR principles.
- Use plain English (reading age 14).
- No excessive bullet points or markdown.
</constraints>
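For teams storing prompts in a library, the same structure can be generated rather than typed. This is a minimal sketch in Python, assuming a hypothetical `build_xml_prompt` helper; the tag names mirror the example above and are a convention, not a fixed schema.

```python
def build_xml_prompt(sections: dict) -> str:
    """Wrap each section body in its own tag, rejecting bodies that contain a section tag."""
    for tag in sections:
        if not tag.isidentifier():
            raise ValueError(f"Invalid tag name: {tag!r}")
    blocks = []
    for tag, body in sections.items():
        for other in sections:
            # A stray tag inside a body would blur the boundaries the tags exist to draw.
            if f"<{other}>" in body or f"</{other}>" in body:
                raise ValueError(f"Section {tag!r} contains a <{other}> tag")
        blocks.append(f"<{tag}>\n{body.strip()}\n</{tag}>")
    return "\n\n".join(blocks)


xml_prompt = build_xml_prompt(
    {
        "role": "ACT AS a senior data privacy officer in the UK.",
        "context": "Our SME operates a SaaS platform. We process basic employee details.",
        "task": "Draft the internal staff policy on acceptable AI use.",
        "constraints": "- Must align with UK GDPR principles.\n- Use plain English (reading age 14).",
    }
)
print(xml_prompt)
```

Swapping the `task` value is then enough to reuse the same role, context, and constraints for a different deliverable.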

Template 8: GDPR Privacy Notice Generation (XML Structure)

Use the XML structure above. Change the task to: "Draft a GDPR-compliant privacy notice for a UK small business website. It must detail what personal data is gathered, list third-party sharing protocols, and clearly notify users of their data rights under UK law. Provide placeholders in brackets for company-specific details."

Long Context Prompting with XML

When dealing with massive datasets (over 20,000 tokens), structure matters immensely. The 2026 standard is to place all your long-form data at the very top of the prompt, wrapped in <documents> tags. Place your specific query and instructions at the very bottom. Anthropic's long-context guidance reports that, in its tests, placing the query at the end of a long prompt can improve response quality by up to 30 percent, especially with complex, multi-document inputs.
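The documents-first, query-last ordering can be sketched as follows in Python. The `build_long_context_prompt` helper is hypothetical, and the inner `<document>`, `<source>`, and `<document_content>` tags are an assumed layout for labelling each file; only the outer <documents> wrapper comes from the guidance described here.

```python
def build_long_context_prompt(documents: dict, query: str) -> str:
    """Put all documents at the top inside <documents> and the query at the very bottom."""
    doc_blocks = []
    for index, (source, text) in enumerate(documents.items(), start=1):
        doc_blocks.append(
            f'<document index="{index}">\n'
            f"<source>{source}</source>\n"
            f"<document_content>\n{text.strip()}\n</document_content>\n"
            f"</document>"
        )
    return "<documents>\n" + "\n".join(doc_blocks) + "\n</documents>\n\n" + query.strip()


# Placeholder file names and contents for illustration only.
long_prompt = build_long_context_prompt(
    {
        "q3_report.txt": "Revenue grew in the third quarter...",
        "q2_report.txt": "Revenue was flat in the second quarter...",
    },
    "Compare revenue growth across the two reports. Think step by step.",
)
print(long_prompt)
```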

Few-Shot Prompting and Prompt Chaining for Complex Tasks

To get an AI to mimic a specific brand voice or structural quirk, instructions are rarely enough. The AI needs to see it.

"Zero-shot" prompting is asking the AI to perform a task without examples. "Few-shot" prompting involves providing two or three examples of successful outputs before presenting the actual task. This is the single fastest way to match an esoteric corporate tone or a highly specific formatting requirement.

Template 9: LinkedIn Professional Post (UK Market)

"ACT AS a B2B content marketer. I want to write a LinkedIn post about AI adoption challenges in UK manufacturing. Here is Example 1 of my writing style: [Paste past successful post]. Here is Example 2: [Paste past successful post]. Notice how I use short, punchy opening hooks, avoid emojis, and end with an open-ended question. Using this exact tone and structure, write a new post based on the following rough notes: [Insert notes]. Maximum 150 words."

Template 10: Interview Question Generator

"I need to generate competency-based interview questions for a mid-level operations manager.

Good Example: 'Tell me about a time you had to manage a sudden supply chain disruption. What specific steps did you take to mitigate the financial impact?'

Bad Example: 'Are you good at handling stress?'

Generate five more questions following the standard of the Good Example, focusing on stakeholder management and budget control."
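The good-example and bad-example pattern used in Template 10 can be assembled programmatically when examples live in a shared library. This is a minimal sketch in Python, assuming a hypothetical `build_few_shot_prompt` helper and the label wording shown.

```python
def build_few_shot_prompt(task: str, good_examples: list, bad_examples: tuple = ()) -> str:
    """List labelled examples first, then state the task that should follow their standard."""
    if not good_examples:
        raise ValueError("Few-shot prompting needs at least one good example")
    lines = []
    for number, example in enumerate(good_examples, start=1):
        lines.append(f"Good Example {number}: {example}")
    for number, example in enumerate(bad_examples, start=1):
        lines.append(f"Bad Example {number}: {example}")
    lines.append("")  # blank line separating the examples from the task
    lines.append(task)
    return "\n".join(lines)


few_shot_prompt = build_few_shot_prompt(
    task=(
        "Generate five more questions following the standard of the Good Examples, "
        "focusing on stakeholder management and budget control."
    ),
    good_examples=[
        "Tell me about a time you had to manage a sudden supply chain disruption. "
        "What specific steps did you take to mitigate the financial impact?"
    ],
    bad_examples=("Are you good at handling stress?",),
)
print(few_shot_prompt)
```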

Prompt Chaining

A common anti-pattern is asking the AI to do too much at once. "Research the UK market, write a business plan, and draft five emails." The result is always shallow.

Prompt chaining breaks a massive task into a sequence of smaller, interconnected prompts. The output of Prompt A becomes the input for Prompt B. This technique leverages the context window of the conversation, so the model works on one specific sub-task at a time, with the previous step's output in front of it, before moving on.

The Competitor Intelligence Chain:

- Prompt 1 (Data Gathering): "ACT AS a market researcher. Read the attached URLs for our three main competitors. Outline the top 5 product features they highlight. Provide citations."

- Prompt 2 (Synthesis): "Take the output from the previous prompt. Cross-reference these features against our own product spec sheet [attached]. Identify our three biggest strategic vulnerabilities."

- Prompt 3 (Formatting): "Take the vulnerability synthesis generated above and turn it into a board-ready one-pager. Use a professional tone. Format with an executive summary, a risk matrix table, and mitigation recommendations."
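The three-step chain above can be sketched as a loop in which each reply is passed into the next prompt. This Python example is an illustration under stated assumptions: `run_chain` and the `{previous}` placeholder are hypothetical conventions, and `fake_model` is a stand-in for whichever real model call a team uses.

```python
from typing import Callable, List


def run_chain(step_templates: List[str], ask_model: Callable[[str], str]) -> List[str]:
    """Run prompts in order, inserting each reply into the next prompt's {previous} slot."""
    outputs: List[str] = []
    previous = ""
    for template in step_templates:
        prompt = template.replace("{previous}", previous)
        previous = ask_model(prompt)
        outputs.append(previous)
    return outputs


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; it only reports how much text it received."""
    return f"[model reply to {len(prompt)} characters of prompt]"


steps = [
    "ACT AS a market researcher. Outline the top 5 product features our competitors highlight.",
    "Here is the feature list:\n{previous}\nIdentify our three biggest strategic vulnerabilities.",
    "Here is the vulnerability synthesis:\n{previous}\nTurn it into a board-ready one-pager.",
]
chain_outputs = run_chain(steps, fake_model)
print(chain_outputs[-1])
```

Keeping every intermediate output in the returned list makes it possible to review each stage before trusting the final deliverable.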

Agentic AI and UK Consumer Law Compliance

We need to address the elephant in the room for 2026. Prompt engineering is evolving into managing autonomous AI agents. We are moving from digital assistants that answer questions to Agentic AI that executes end-to-end workflows autonomously.

This brings significant legal implications. In March 2026, the UK's Competition and Markets Authority (CMA) published strict guidance on how the Digital Markets, Competition and Consumers Act 2024 (DMCCA) applies to AI agents. Lewis Silkin has published a clear analysis of the CMA's guidance for businesses.

Let me be blunt about this. If your customer-facing AI agent hallucinates a refund policy or misleads a consumer, your business is liable. The GOV.UK guidance on complying with consumer law when using AI agents makes this unambiguous. The CMA now has the power to impose fines of up to 10 percent of your global turnover for breaches.

When prompting or configuring AI agents for customer service, you must build in consumer law safeguards.

Template 11: DMCC Act Consumer Law Responder

"ACT AS a UK consumer rights specialist fully versed in the Digital Markets, Competition and Consumers Act 2024 (DMCCA). Draft a response to the following customer complaint regarding an AI-driven pricing error on our e-commerce store. Acknowledge their statutory rights under the Consumer Rights Act 2015, offer a proportionate remedy, and ensure the tone is highly empathetic. Do not admit absolute legal liability, but prioritise resolving the dispute fairly."

The GOV.UK guidance on agentic AI and consumers recommends a "Train, Monitor, Address" framework. Ensure your prompts explicitly instruct the AI not to mislead consumers, to properly label paid endorsements, and to maintain transparency about the fact that the consumer is interacting with an AI.

Platform-Specific Tips: ChatGPT, Claude, Gemini, and Copilot

The prompting landscape is fragmented. What works beautifully on one system might fail on another. UK professionals must understand the architecture of the tools they are actually using in their tech stacks.

Microsoft Copilot (M365 Enterprise)

Copilot has deep access to a company's internal Graph data (emails, Teams chats, SharePoint files). The best prompts here utilise "grounding" - pointing the AI to specific internal data sources. The Microsoft Copilot prompts guide is essential reading for enterprise deployments.

- Tip: Use the / command to reference specific files directly in the prompt.

- Template 12 (Copilot Meeting Recap): "Summarise the action items from [Meeting Name]. Which tasks were specifically assigned to me? Draft an email to the team confirming my deadlines."

- Template 13 (Inbox Parse): "Write a summary based on all emails from Sarah in the past two weeks regarding the Q3 budget. Highlight any immediate blockers."

Microsoft's own top 10 Copilot tips for M365 are worth bookmarking alongside this guide.

Claude (Anthropic)

Claude excels at nuanced writing and massive document analysis. As discussed, it natively understands XML tags. It also features extended thinking, where the depth of reasoning is controlled dynamically based on task complexity. The official Claude prompting best practices document is the authoritative reference.

- Tip: Do not use aggressive language ("YOU MUST", "CRITICAL"). Claude responds better to calm, direct instructions.

- Template 14 (Policy Drafting): "[Paste 50-page employee handbook] Extract all rules regarding sickness absence and rewrite them into a 2-page quick reference guide for line managers. Use UK English."

Google Gemini 3.1 Pro

Gemini's unique advantage is its massive 1-million token context window and native multimodal capabilities. It can process text, code, images, and audio natively in the same prompt. The Nettpilot Gemini business guide for 2026 covers these capabilities in detail.

- Tip: Gemini Pro includes "Deep Research" capabilities, allowing you to upload your own proprietary files as sources for autonomous market analysis.

- Template 15 (Procurement Vendor Analysis): "[Attach vendor documents]. Create a comparative Markdown table of these 5 vendors. Calculate the total cost of ownership over 3 years for each, assuming a 5% annual UK inflation rate. Output the mathematical reasoning before the table."
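Because Template 15 asks the model to do arithmetic, it is worth having an independent figure to compare against. The sketch below, in Python, assumes one particular reading of the task: an upfront cost plus an annual fee that is charged at today's price in year one and rises by the inflation rate each year after. The `three_year_tco` function and the vendor figures are hypothetical.

```python
def three_year_tco(upfront: float, annual_cost: float, inflation: float = 0.05, years: int = 3) -> float:
    """Sum the upfront cost and the annual fee, inflating the fee from year two onwards."""
    running_costs = sum(annual_cost * (1 + inflation) ** year for year in range(years))
    return round(upfront + running_costs, 2)


# Hypothetical vendor: £2,000 setup and £10,000 per year at today's prices.
# Year-by-year fees: 10,000 + 10,500 + 11,025 = 31,525, plus the 2,000 setup.
tco = three_year_tco(upfront=2000, annual_cost=10000)
print(f"£{tco:,.2f}")
```

If the model's table disagrees with a check like this, the first thing to inspect is whether it applied inflation to year one.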

ChatGPT (GPT-4o)

ChatGPT remains the most versatile generalist. It utilises Memory to remember user preferences across sessions.

- Tip: Use Custom Instructions to permanently set the UK English requirement and standard professional persona, saving tokens on every subsequent prompt.

- Template 16 (Performance Review Prep): "Review my notes on James's performance this quarter [Insert notes]. Structure a feedback conversation using the SBI (Situation, Behaviour, Impact) model. Keep the tone constructive and objective."

Perplexity AI

Perplexity is an answer engine grounded in live web search. It recently introduced Premium Sources, allowing it to pull directly from paywalled market data like Statista and PitchBook. The March 2026 Perplexity update introduced "Model Council", which runs GPT-4o, Claude, and Gemini in parallel to synthesise the best answer.

- Tip: Use it for factual, up-to-date market intelligence rather than creative writing.

- Template 17 (Competitor SWOT): "Analyse the current market position of [Competitor Name]. Use Model Council to cross-reference data. Output a detailed SWOT analysis citing specific news articles or financial filings from the last six months."

Prompt Frameworks: Platform Comparison Table

| Framework | Best Platform | Complexity | Primary Use Case |
|---|---|---|---|
| CO-STAR | All tools | Medium | Creative and analytical tasks |
| RISEN | All tools | Low | Step-by-step task completion |
| CoT (Zero-Shot) | All tools | Very Low | Complex reasoning and calculations |
| XML Tagging | Claude, GPT-4o | Medium | Structured document outputs |
| Few-Shot | All tools | Low-Medium | Tone and style matching |
| Prompt Chaining | All tools | Medium-High | Multi-stage complex deliverables |
| Grounding (/) | Copilot (M365) | Low | Internal data referencing |

Conclusion: Building Your Personal Prompt Library

Prompt engineering is no longer a niche technical pursuit; it is a core professional competency. Job listings across the UK now frequently reference AI fluency as a required skill, with Salesforce's 2026 AI predictions report confirming that agentic AI adoption is reshaping what employers expect from every professional function.

The most efficient teams in 2026 do not write prompts from scratch every day. They build prompt libraries - central repositories of tested, highly specific prompt templates that anyone in the business can copy, paste, and execute.

Start simply. Adopt Chain of Thought (CoT) and Role Prompting this week. Introduce XML tagging for complex documents next week. Measure the impact by tracking the "first-draft acceptance rate" - how often the AI produces usable work on the first try without requiring tedious iteration.
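The first-draft acceptance rate is simple enough to track in a spreadsheet or a few lines of code. This is a minimal sketch in Python, assuming a hypothetical log in which each entry records whether the first AI draft was usable without further iteration.

```python
def first_draft_acceptance_rate(accepted_first_try: list) -> float:
    """Return the share of logged tasks where the first AI draft was accepted."""
    if not accepted_first_try:
        raise ValueError("No tasks logged yet")
    return sum(accepted_first_try) / len(accepted_first_try)


# Hypothetical week of logged tasks: True means the first draft was usable as-is.
week_log = [True, False, True, True, False]
rate = first_draft_acceptance_rate(week_log)
print(f"First-draft acceptance rate: {rate:.0%}")
```

Comparing this figure before and after adopting a new technique gives a like-for-like measure of whether the technique is helping.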

The transition from AI as a chatbot to Agentic AI executing full workflows is well underway. Those who master the architecture of prompting today will be the ones directing the autonomous digital assembly lines of tomorrow. The initial conditions dictate the outcome. Set them correctly.

References

- Prompting best practices - Claude API Documentation - Anthropic, 2026

- Estimating AI productivity gains from Claude conversations - Anthropic Economic Research, November 2025

- AI promised to free up workers' time. UC Berkeley Haas researchers found the opposite. - Haas School of Business, UC Berkeley, 2025

- AI Prompt Engineering Cheat Sheet for Software Teams (2026 Guide) - Codewave, 2026

- COSTAR Prompt Engineering: What It Is and Why It Matters - Portkey, 2025

- Mind Your Tone: Investigating How Prompt Politeness Affects LLM Accuracy - Dobariya & Kumar, arXiv, October 2025

- The British Don't Say What They Mean - And That's a Problem for AI - Kerson AI, 2025

- Effective context engineering for AI agents - Anthropic Engineering, September 2025

- Disciplinary outcome letter templates - Acas, 2026

- Status determination statements (IR35) - Part 9 - HMRC / GOV.UK, January 2025

- The role of artificial intelligence in the workplace - Acas, May 2025

- Major barriers to AI adoption remain for UK businesses - techUK & ANS, February 2025

- Artificial intelligence guidance and resources - Information Commissioner's Office (ICO), 2026

- Guidance on AI and data protection - ICO, 2026

- Agentic AI and consumers - GOV.UK, 2026

- Complying with consumer law when using AI agents - GOV.UK / CMA, March 2026

- Agentic AI and consumer law: the CMA's guidance for businesses - Lewis Silkin, March 2026

- Free agent: new UK guidance on agentic AI for businesses - Ashurst, March 2026

- Agentic AI 2026: From Assistants to High-Productivity Digital Peers - SDG Group, 2026

- Learn about Copilot prompts - Microsoft Support, 2026

- Top 10 things to try first with Microsoft 365 Copilot - Microsoft, 2026

- Google Gemini for Business 2026: Models, MCP & What Changed - Nettpilot, 2026

- Announcing Premium Sources - Perplexity, 2026

- What We Shipped - March 2026 - Perplexity Changelog, March 2026

- The Future of AI Agents: Top Predictions and Trends to Watch in 2026 - Salesforce UK, 2026

- Prompt Engineering Best Practices 2026 - Thomas Wiegold, 2026

- 10-Minute Competitor Research Using AI: The Complete Prompt Library - Faraz Mubeen Haider, Medium, 2026

Key Takeaways

- Chain of Thought prompting (adding "think step by step") improves AI reasoning quality for complex UK business analysis tasks by a measurable margin - verified by [academic research on LLM accuracy](https://arxiv.org/abs/2510.04950).

- Role prompting with specific UK professional context ("ACT AS an ACAS-aware HR manager") dramatically narrows output to relevant, jurisdictionally accurate advice rather than generic American corporate language.

- XML-tagged prompts produce more consistent structured outputs in Claude and GPT-4o, reducing misinterpretation on complex multi-part briefs.

- Few-shot prompting - providing 2-3 examples before your request - is the single fastest way to match your brand voice or output style without lengthy written explanations.

- BLUF (Bottom Line Up Front) prompting cuts AI waffle: instruct the model to lead with the conclusion, then provide supporting detail - the opposite of how AI naturally responds.

- Format control instructions ("strictly JSON", "Markdown table only") eliminate the need for manual reformatting when moving data into business documents, spreadsheets, or presentations.

- Context engineering - curating the exact data passed to the AI - moves beyond simple phrasing to managing the model's entire attention budget, and is now [the standard approach](https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents) for professional deployments.
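Several of these takeaways can be combined in a single prompt. The sketch below is one hedged way to assemble a role instruction, a format-control line, and a few worked examples into a few-shot prompt; the `build_few_shot_prompt` helper, its wording, and the sample text are assumptions made for illustration, not a prescribed format for any particular tool.

```python
def build_few_shot_prompt(
    role: str,
    examples: list[tuple[str, str]],
    request: str,
    output_format: str,
) -> str:
    """Assemble a role line, a format instruction, worked examples, and the request."""
    lines = [f"ACT AS {role}.", f"Output format: {output_format}.", ""]
    for i, (source, rewritten) in enumerate(examples, start=1):
        # Each example pairs an input with the style of output we want back.
        lines += [f"Example {i} input: {source}", f"Example {i} output: {rewritten}", ""]
    lines.append(f"Now apply the same style to: {request}")
    return "\n".join(lines)


# Invented sample content for illustration.
prompt = build_few_shot_prompt(
    role="an ACAS-aware HR manager",
    examples=[
        ("Staff must not be late.", "Please aim to arrive by your agreed start time."),
        ("No phones at desks.", "Please keep personal phone use to break times."),
    ],
    request="Holiday requests need two weeks' notice.",
    output_format="Markdown table only",
)
print(prompt)
```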

TTAI.uk Team

AI Research & Analysis Experts

Our team of AI specialists rigorously tests and evaluates AI agent platforms to provide UK businesses with unbiased, practical guidance for digital transformation and automation.
